VEI.space

07 Aug 2017 >> Teach Something

So, I saw a curious sight today at work. I saw a colleague with a PowerShell window open, and an Excel spreadsheet - with the contents of said spreadsheet being something like this.

| Account | First Name | Last Name | Command |
| --- | --- | --- | --- |
| example1@contoso.com | Example | User | `Get-ADUser example1@contoso.com \| Set-ADUser -DisplayName "Example User"` |
| example2@contoso.com | Example2 | User2 | `Get-ADUser example2@contoso.com \| Set-ADUser -DisplayName "Example2 User2"` |

…

And so on, for a few hundred rows - with the contents of that last column of course all being generated with the CONCATENATE function, and the first and last names being derived manually.

In this, I recognized something I’d done in earlier life - Excel is not the thing you’re meant to do this kind of thing in, but it was what I was taught in school, and it was “easy”. (I am not sure what modern kids are being taught in school, but I’m pretty sure it leans more towards the “developer” side than the “ops” side). Excel is a tool, just like PowerShell is - and sometimes, it’s about the tool you know.

Now, the code they had written in the command column wouldn’t work (they had `-LDAPFilter` on both the Get- and Set- side of the equation for starters), so I took five minutes and wrote up a simple script. This isn’t necessarily the shortest way of writing this code (or the best performing, or the one I’d necessarily use - I’d use something shorter if I was writing it at the console, or something which tests against the fail state below if it was to be part of a longer script), but being simple to understand was more important in this case.

    Import-Module ActiveDirectory #Even though this isn't necessary on newer versions of PowerShell, it is best practice.
    $users = Get-Content users.txt
    foreach ($user in $users) {
        $ADObject = Get-ADUser -Filter {UserPrincipalName -eq $user}
        $First = $ADObject.GivenName
        $Last = $ADObject.Surname
        $ADObject | Set-ADUser -DisplayName "$first $last"
    }

(That first `$ADObject` line is the third revision; the first one tried `Get-ADUser $user`, the second was the same as the third but with `"$user"` instead, which apparently PowerShell v4 doesn’t like).

Now, of course, I didn’t include the -PassThru parameter, so no output. So we checked a few manually, and they seemed fine, but my colleague pointed out the potential fail state of a user not having the GivenName or Surname filled in and so getting a display name of “ ”. So I wrote a quick one liner so we could see everything:

    $users | foreach {Get-ADUser -filter {UserPrincipalName -eq $_} -Properties DisplayName | select DisplayName,GivenName,Surname,UserPrincipalName}

And sure enough, there were about 5 or 6 that hadn’t had the name filled in, and they did that manually. Hours of busywork for the apprentice, reduced to half an hour or less - and crucially, it got them interested in learning more about PowerShell.
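The empty-name fail state my colleague spotted could also be guarded against in the script itself. This is only a minimal sketch: `Get-SafeDisplayName` is a hypothetical helper name (it is not part of the ActiveDirectory module), and it simply returns `$null` when either half of the name is missing so the caller can skip that account.

```powershell
# Hypothetical helper, not part of the ActiveDirectory module.
function Get-SafeDisplayName {
    param (
        [string]$GivenName,
        [string]$Surname
    )
    # Treat $null, empty and whitespace-only values as missing.
    if ([string]::IsNullOrWhiteSpace($GivenName) -or [string]::IsNullOrWhiteSpace($Surname)) {
        return $null
    }
    "$($GivenName.Trim()) $($Surname.Trim())"
}

# Usage inside the foreach loop from the script above (assumes $ADObject is set):
# $DisplayName = Get-SafeDisplayName -GivenName $ADObject.GivenName -Surname $ADObject.Surname
# if ($DisplayName) { $ADObject | Set-ADUser -DisplayName $DisplayName }
# else { Write-Warning "$($ADObject.UserPrincipalName) is missing a name; skipped." }
```

Skipped accounts show up as warnings, which gives you the list to fix by hand without a second pass.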

The moral of this story is a pretty simple one - you don’t need to be the absolute best at a topic to go ahead and teach someone something - especially if it’s going to save them a whole lot of time and effort in the long run.

14 May 2017 >> Rhapsody in ETERNALBLUE

So, by now, I probably don’t need to tell you about the wcry ransomware attack currently running rampant through the IT systems of Europe (and possibly/probably beyond). It spreads by exploiting the SMB vulnerability patched in MS17-010, using the exploit known as ETERNALBLUE.

In this specific case, I wish to look at what someone in my position at my previous employer could have done. I was helpdesk, not responsible for overall network design or hardening - so there are some changes that would never have been implemented had I suggested them, like enabling Windows Firewall on domain networks - and there are things we had in place that would have helped limit damage, like a fairly robust backup solution for most systems. But still, there are things that I could have done.

A Little Introduction

First, we must have a little introduction to the exploit - just to establish some grounding.

ETERNALBLUE is an exploit for a vulnerability in Windows’ implementation of version 1 of the Server Message Block protocol, and it provides remote code execution capability.

What’s the Server Message Block protocol? (Hereafter referred to as SMB for brevity). It controls how Windows (and compatible clients, such as Samba for Linux hosts) handle file sharing. If you have a shared network drive hosted by Windows Server, you’re most likely using SMB. It is enabled by default on all modern (and most not so modern) Windows installations. Version 1 has been around since Windows 3.11, version 2 was introduced in Windows Vista/Server 2008, and version 3 with Windows 8/Server 2012.

Generally, SMB traffic is restricted to within an organization’s internal domain. However, once one host is infected with malware taking advantage of ETERNALBLUE (say, with a specially crafted email sent to one of the least technical members of staff), it is then trivial for the infection to spread to the rest of the domain network - particularly if Windows Firewall is completely turned off for the domain as well.

Hardening What We Can

1. Install Updates

The most important piece of advice is the same one everyone else is going to tell you. Critical updates need to be applied as soon as possible. Perhaps not the instant they are released, with MS having previously screwed up some (and of course, even the most robust testing cannot take account of every configuration of hardware and software), but as soon as is feasible - because we’re seeing a much shorter gap between an exploit being leaked or mostly theoretical and it being taken advantage of in the wild. (Particularly with things like maliciously crafted Microsoft Office documents).

If you have a WSUS infrastructure, make good use of it. If you do not have a WSUS infrastructure, wave every single article about the spread of the NHS cyberattack to your superiors until you get one. Set a sane update schedule for your environment and work out a plan for getting the most important patches into your organization as soon as you can.

For this particular case, due to the massive spread, MS has released patches for XP, 2003, and Windows 8, which they otherwise no longer support. Machines running these systems (along with Windows Vista, which was in support when the ETERNALBLUE patch was issued, but has now fallen out of support) should install these patches ASAP, but with the understanding that this is a temporary measure; that this specific exploit might be corrected, but these systems remain vulnerable to all sorts of threats (present and future) - and so, if they cannot be removed from the network, they must be as isolated as is humanly possible from every other machine.

There are multiple different ways to query a machine’s OS, but a quick one is to query the AD computer objects in your domain:

    Get-ADComputer -Properties operatingsystem -Filter {operatingsystem -like "*2003*"}
    Get-ADComputer -Properties operatingsystem -Filter {operatingsystem -like "*XP*"}
    Get-ADComputer -Properties operatingsystem -Filter {operatingsystem -like "*Vista*"}
    Get-ADComputer -Properties operatingsystem -Filter {operatingsystem -like "* 8 *"}
    #the spaces need to be here or else it'll get all Server 2008/R2 clients, as well as all Windows 8.1 clients.
    #You could combine all of these into one longer query or use a foreach, I'm just trying to keep things simple in this case.

However, particularly in environments such as the UK’s NHS (with a lot of embedded systems), this may not tell the full picture - and of course, many of those embedded systems cannot be updated for one reason or another. And this problem isn’t specific to embedded Windows systems either; many Linux embedded systems (which are most likely not on the domain at all) also only implemented SMB1 - and whether or not these implementations are vulnerable to ETERNALBLUE, they may very well be vulnerable to something else. Find these hosts using whatever you can and lock down whatever is possible - ensure they have no internet access and are cordoned off as much as possible from the rest of the corporate network.
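As the comment in the queries above suggests, the four filters can be folded into one pass. This is a rough sketch rather than a tuned query: `Test-LegacyOperatingSystem` and `$LegacyPatterns` are hypothetical names, and the matching happens client-side with `-like`, so it pulls every computer object first and will be slower than a server-side filter on a large domain.

```powershell
# Patterns taken from the four queries above.
$LegacyPatterns = '*2003*', '*XP*', '*Vista*', '* 8 *'

# Hypothetical helper: returns $true if the OS name matches any legacy pattern.
function Test-LegacyOperatingSystem {
    param (
        [Parameter(Mandatory = $true)]
        [string]$OperatingSystem,
        [string[]]$Pattern = $LegacyPatterns
    )
    foreach ($p in $Pattern) {
        if ($OperatingSystem -like $p) { return $true }
    }
    return $false
}

# Against a real domain (assumes the ActiveDirectory module is available):
# Get-ADComputer -Filter * -Properties OperatingSystem |
#     Where-Object { $_.OperatingSystem -and (Test-LegacyOperatingSystem $_.OperatingSystem) } |
#     Select-Object Name, OperatingSystem
```

Keeping the patterns in one array means adding another out-of-support OS later is a one-line change.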

2. Remove SMB1 From Your Environment

Like most things designed during the Windows 3.11 era of computing, security was not hugely at the forefront of the design of the SMB1 protocol. SMB1 is unnecessary for any version of Windows from Vista/Server 2008 onwards to communicate with any other version of Windows from Vista/Server 2008 onwards. If a machine in your environment does not need to talk to one of the problem cases listed above, it is worth disabling - even if this specific exploit may now be patched, there will be others in future. (Very few machines in your environment should need to - and if SMB1 is a requirement of something relatively cheap for a business to replace, such as printers from 15 years ago that are falling apart, again, use this as an opportunity to push for things.)

There are several different ways to accomplish this:

a. Deployment Images

If you have a deployment server, disabling SMB1 in your deployment images is an excellent way to make sure no new installation has it. For clients, this can be accomplished by mounting your custom image using `Mount-WindowsImage`. The exact parameters you’ll need for this command will vary depending on your environment, so I’d suggest searching for more resources if you’re not sure.

If I have my Windows 8/8.1/10 custom image mounted to C:\Mount\, I can then run these commands to disable SMB1 from any future installs from that image.

    Get-WindowsOptionalFeature -FeatureName SMB1Protocol -Path C:\Mount | Disable-WindowsOptionalFeature -Path C:\Mount -Force
    Dismount-WindowsImage -Path C:\Mount -Save

This solves the problem for new client deployments - however, most organizations are not redeploying all clients overnight. For “legacy” hosts, the registry setting below must be changed instead - which can be done via modifying the image, or…

b. Group Policy (Vista/Win7/Server 2008/Server 2008 R2 Only)

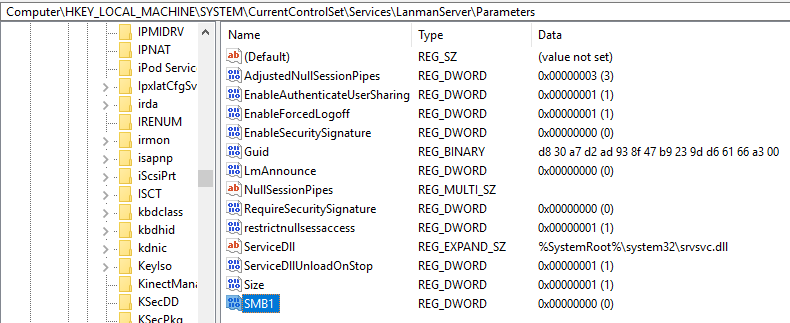

SMB1 is controlled by the following registry key:

    HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Services\LanmanServer\Parameters\SMB1

Setting this value to 0 disables SMB1. The registry key may still be in use on later versions of Windows, though the MS documentation only mentions it being valid for these.

c. PowerShell - Against Live Systems (Windows 8+/Server 2012+ Only)

There are three lines of code we need to know; two of which can be easily run en masse, and one that is slightly more complicated if you don’t have PowerShell Remoting in your environment.

First, `Set-SmbServerConfiguration -EnableSMB1Protocol $false -Force`. This works on both Windows clients and Windows Server. It accepts a `-CimSession` parameter, so it can be run against many machines very quickly by setting up a CIMSession (please see MS’s documentation on New-CIMSession if you’re new to CIMSessions).

Next, `Uninstall-WindowsFeature FS-SMB1`. This only works on Windows Server. It accepts a ComputerName parameter so it can be easily run against remote hosts - however, it can only be run against one at once. Use `foreach` and a list of server names to run through things quickly - or use Invoke-Command if remoting is available. Note that the uninstall is only completed after the machine is restarted.

Finally, there is a variation on the command we saw above: `Get-WindowsOptionalFeature -FeatureName SMB1Protocol -Online | Disable-WindowsOptionalFeature -Online -Force`. This one can only be run on Windows clients (or rather, it can be run on Windows Server, but when I tried it instead of running the command above, the uninstall failed). It supports no remoting capability as part of the cmdlet, so if you have remoting enabled to all clients in your environment, use Invoke-Command, otherwise you’re probably looking at creating a startup script.

d. PowerShell - DSC

If you’re already running DSC in your environment, then you may wish to consider adding something like the below to your configuration:

    $featuresabsent = @(
        "SMB1Protocol"
        "MicrosoftWindowsPowerShellV2Root" #disable PSv2 to prevent exploits - https://www.youtube.com/watch?v=Hhpi3Sp4W4k
        "MicrosoftWindowsPowerShellV2"
    )
    foreach ($f_a in $featuresabsent) {
        WindowsOptionalFeature $f_a {
            Name = "$f_a"
            Ensure = "Disable"
        }
    }

This configuration entry is for clients (in fact, it’s a sample for the DSC script I use to configure a new home machine), but it would not be difficult to add for servers - using the WindowsFeature DSC resource instead. If your servers are in push mode, you’d obviously need to push the new config out; if you have a pull server, just wait for them to grab the new information. (Do be aware that this will probably need a restart, so watch out for DSC clients who have auto restart set in their LCM config).

Wrapping Up

Hopefully, some of this has been of use in helping make your network a little more secure, even if you only have limited access to systems.

12 May 2017 >> Sometimes It's The Basics

I’ve been playing around with PowerShell DSC lately, improving on my home lab (although it’s quite limited by the lack of compute resources/decent storage). Today, I wanted to get credential encryption set up. So, I made a helper function to generate the certs, export the files and write the certificate thumbprint to the console - of course, in production with an existing AD infrastructure, I’d use ADCS (and one of the purposes of this home lab is to learn how to set it up).

Here is the relevant helper function. Do not copy and paste this yet, for the reason I’m going to explain below:

    function GenerateDSCCertificate {
        [CmdletBinding()]
        param (
            [string]$PublicFilePath = "$psscriptroot\DSCCertficate.cer",
            [string]$PrivateFilePath = "$psscriptroot\DSCCertificate.pfx",
            [string]$SubjectName = "DSC Certificate for Home Lab",
            [Parameter(Mandatory=$true)]
            [SecureString]$Password
        )
        #Cast to [int] so the comparison below is numeric rather than a string comparison.
        [int]$WindowsMajorVersion = (Get-CimInstance Win32_OperatingSystem | Select-Object -ExpandProperty Version).Split(".")[0]
        #New-SelfSignedCertificate was upgraded on Windows 10.
        if ($WindowsMajorVersion -ge 10) {
            $cert = New-SelfSignedCertificate -Type DocumentEncryptionCertLegacyCsp -DnsName $SubjectName -HashAlgorithm SHA256
            $cert | Export-PfxCertificate -FilePath $PrivateFilePath -Password $Password
            $cert | Export-Certificate -FilePath $PublicFilePath
            $cert | Remove-Item -Force #remove the public cert from the local cert store
            Write-Output $cert.thumbprint
        }
        else {
            Write-Error "Cannot generate SelfSignedCertificate on this version of Windows. Please see this article: https://msdn.microsoft.com/en-us/powershell/dsc/secureMOF"
        }
    }

Nice and simple. My authoring node is Windows 10, and my target nodes are Windows Server 2012 R2, so I made the decision to use Method 2 (again, it’s a simple home lab), because that would be easier than having to load the New-SelfSignedCertificateEx script onto each node and copy the resulting public certificate back.

So, I then go to run a DSC configuration to generate a MOF. The actual configuration isn’t too relevant, but it does include a component where it needs a domain credential to join the domain if not already joined. Here is the ConfigData block for that configuration.

    $ConfigData = @{
        AllNodes = @(
            @{
                NodeName = "*"
                DomainName = "testing.internal.vei.space"
                DomainNetbiosName = "VEITest"
                CertificateFile = "$PSScriptRoot\DSCCertificate.cer"
                Thumbprint = "D5639ADD8A0F1765FB1103D1CDBF02139200443A"
                PSDSCAllowDomainUser = $true
            }
            @{
                NodeName = "Management"
            }
        )
    }

Whenever I tried running this configuration, the same thing would happen:

    ConvertTo-MOFInstance : System.ArgumentException error processing property 'Password' OF TYPE 'MSFT_Credential': Cannot load encryption certificate. The certificate setting 'C:\Users\Charles\OneDrive\Git\DSC_LabSetup\DSCCertificate.cer' does not represent a valid base-64 encoded certificate, nor does it represent a valid certificate by file, directory, thumbprint, or subject name.
    At C:\WINDOWS\system32\WindowsPowerShell\v1.0\Modules\PSDesiredStateConfiguration\PSDesiredStateConfiguration.psm1:310 char:13
    + ConvertTo-MOFInstance MSFT_Credential $newValue
    + ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~
    + CategoryInfo : InvalidOperation: (:) [Write-Error], InvalidOperationException
    + FullyQualifiedErrorId : FailToProcessProperty,ConvertTo-MOFInstance

So, what’s the problem here? Some Googling and Binging gave nothing useful (if you’re here because of a search engine, hello!). It took me some time to figure out the problem (during which I got it working by importing the public certificate into the authoring node cert store and passing the thumbprint instead), but let’s take another look at those filepaths:

    [string]$PublicFilePath = "$psscriptroot\DSCCertficate.cer"
    CertificateFile = "$PSScriptRoot\DSCCertificate.cer"

I made a typo when setting up the helper function for the export! 🤦 I only noticed while I was tab-completing the certificate names to copy the PFX to the target node (thanks to the ability of Copy-Item to copy to a PSSession, I didn’t even need to set up an SMB share with custom permissions due to the authoring node being a workgroup machine).
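A cheap guard against this kind of mismatch is to check that every `CertificateFile` in the configuration data actually exists before compiling the MOF. The following is just a sketch: `Get-MissingCertificateFile` is a hypothetical helper name, and it assumes the ConfigData shape shown earlier (an `AllNodes` array of hashtables).

```powershell
# Hypothetical helper: lists CertificateFile paths in a ConfigData hashtable that do not exist on disk.
function Get-MissingCertificateFile {
    param (
        [Parameter(Mandatory = $true)]
        [hashtable]$ConfigurationData
    )
    foreach ($node in $ConfigurationData.AllNodes) {
        # Only nodes that set CertificateFile are checked.
        if ($node.ContainsKey('CertificateFile') -and
            -not (Test-Path -LiteralPath $node.CertificateFile -PathType Leaf)) {
            $node.CertificateFile
        }
    }
}

# Usage before compiling the configuration (assumes $ConfigData as defined earlier):
# $missing = Get-MissingCertificateFile -ConfigurationData $ConfigData
# if ($missing) { throw "Certificate file(s) not found: $($missing -join ', ')" }
```

A "file not found" message naming the path would have pointed at the misspelled filename much faster than the encryption error did.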

So yeah. Sometimes, it’s the basics. Now I need to go set up a proper ADCS infrastructure and a pull server to make this sort of thing less hassle in the future.

26 Apr 2017 >> Scheduled Job: PowerShell Transcription Logging and OneDrive

Since the option became available, I’ve been using PowerShell transcription logging on my personal devices by setting a local Group Policy. (For more information on it and the other PowerShell related Group Policies, please see this article - particularly if you’re in a domain environment and need to make sure no malicious commands are being run). It’s really useful for being able to go back and search through the commands that I’ve run on any machine (as is Ctrl+R for local history searching).

However, there’s a problem. I had my transcript folder set up as a folder within OneDrive. Since I tend to leave PowerShell sessions open for a long time rather than closing and reopening them, this meant that OneDrive was constantly trying to process a file that it couldn’t touch (because the transcript file is only available to upload after the session it relates to is closed). On my desktop, the impact of this was barely noticeable, but on my laptop, it was causing OneDrive to use a lot of CPU that it didn’t need to, and drain my battery a lot faster than it should have done.

So, a relatively easy fix then:

- `mkdir C:\PowerShellTranscripts\`

- Change the Group Policy to point to C:\PowerShellTranscripts\ rather than the previous location in OneDrive.

- Create a new scheduled job to move files from C:\PowerShellTranscripts to the location I want them in in OneDrive:

      $script = {
          $TranscriptsPath = "C:\PowerShellTranscripts"
          $OutputPath = Join-Path -Path $env:OneDrive -ChildPath "Documents\PowerShell\PowerShell Transcripts"
          $DaysDelay = 3
          Get-ChildItem $TranscriptsPath | where LastWriteTime -lt (get-date).AddDays(-$DaysDelay) | Move-Item -Destination $OutputPath
      }
      $option = New-ScheduledJobOption -StartIfOnBattery
      $trigger = New-JobTrigger -Daily -At "8PM"
      Register-ScheduledJob -Name "Migrate Transcripts" -ScriptBlock $script -MaxResultCount 14 -ScheduledJobOption $OPTION -Trigger $trigger

I’m not sure when the `$env:OneDrive` variable was added, but it saves me having to look it up in the registry. (If you’re not on Windows 10, you may need to get this location by running `Get-ItemProperty HKCU:\Software\Microsoft\OneDrive\ | select -ExpandProperty UserFolder`).

This is a lot easier for OneDrive to deal with, as it’s getting a few KB of files each day at 20:00, rather than having to constantly process.
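If you want to try the move logic by hand before registering the job, the pipeline can be wrapped in a function. This is a sketch under assumptions: `Move-OldTranscript` is a hypothetical name, and creating the destination folder when it is missing is my addition rather than something the scheduled job does.

```powershell
# Hypothetical wrapper around the pipeline used in the scheduled job.
function Move-OldTranscript {
    param (
        [Parameter(Mandatory = $true)][string]$Path,
        [Parameter(Mandatory = $true)][string]$Destination,
        [int]$DaysDelay = 3
    )
    # Create the destination if it is missing; otherwise Move-Item would
    # rename a single item to the destination name instead of moving it into a folder.
    if (-not (Test-Path -LiteralPath $Destination)) {
        New-Item -ItemType Directory -Path $Destination -Force | Out-Null
    }
    $cutoff = (Get-Date).AddDays(-$DaysDelay)
    # Same selection as the job: top-level items last written before the cutoff.
    Get-ChildItem -LiteralPath $Path |
        Where-Object { $_.LastWriteTime -lt $cutoff } |
        Move-Item -Destination $Destination
}

# Usage (paths as in the job above):
# Move-OldTranscript -Path "C:\PowerShellTranscripts" -Destination (Join-Path $env:OneDrive "Documents\PowerShell\PowerShell Transcripts")
```

The delay matters because it leaves recent transcripts alone, which avoids moving a file that an open session is still writing to.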

Going forward, I’ll probably add creating the C:\PowerShellTranscripts folder and configuring the registry key for transcription logging to the scripts I use when setting up a new personal machine. (In an environment where the machine likely has more than one user, you would want to make sure that the C:\PowerShellTranscripts folder is secured as well).

21 Apr 2017 >> Going Forwards

So, my employment was terminated in lieu of notice last week, and I think I’m still coming to terms with that a bit.

The main thing I want to do here is clean up some of my old PowerShell scripts into a better format. (Also, clean up the CSS formatting on the splash page - I am not a web developer by nature!)

- To delete anything that no longer has any relevance.

- To turn the mostly monolithic modules into a much easier to maintain collection of scripts - breaking out each individual function into its own script file, making finding the one I’m looking for to be much easier.

- With ad-hoc scripts that I’ve written, figure out if there’s anything worth keeping and turn that into a function in the module if so.

- To get everything properly version controlled and on GitHub - my old boss didn’t really understand the need for version control, resulting in me bodging something together using scheduled `robocopy`s. The Head of IT did understand the need for version control, but the idea to get me set up on the TFS server came too close to the hammer falling.

- To write tests for all functions using Pester.

- To write help for all functions using PlatyPS.

I want to write at least one post a week, to help keep me focused.

My plans otherwise are to look for a temporary position, and get my home lab set back up so I can take the 70-411 somewhere around my birthday. Ideally, I also want to use this as a learning opportunity for using PowerShell DSC - I already have basic domain setup scripts, though they need cleaning up. I’d also like to use a Nano Server trial as a DSC pull server.

The main problem is that I’m severely struggling for local storage at the moment, so getting that expanded is priority one - I want to be very sparing if I end up using Azure VMs for this task (though of course, learning more about Azure wouldn’t hurt either - except my wallet, and I need to be sparing with that until I find something else).

So yeah, if you’re in the UK, in the Barnsley/Sheffield/Wakefield kind of area and looking for an experienced Service Desk Engineer (or Junior Infrastructure Technician) who’s pretty decent at scripting things, let me know.

31 Mar 2017 >> Quickly Licensing Exchange Online Users using PowerShell

So here’s something that might be useful for other admins looking to move to Exchange Online - particularly if you’re used to an on-premises environment, as I was.

To be fully set up for Exchange Online, a user needs a few things:

- They need a -UsageLocation set.

- They need a license assigning.

- If you’re operating in a hybrid environment with Exchange 2010/2013, the mailbox needs migrating (this post will not cover that part). If you’re not in a hybrid environment, assigning the license is enough to create the mailbox.

- For the user to be able to log in, they need their regional configuration (time zone) setting.

- In our case, I also needed to double check the domain used for the UserPrincipalName - a lot of our users were set up with a UPN matching our internal domain, when ideally they should login to Office 365 with the external domain that they’re familiar with.

Prerequisites

The most important thing here is the Azure Active Directory cmdlets for PowerShell, downloadable from here. We will be using the v1 cmdlets for this post, as I have not yet played around with the v2 ones. (The V1 cmdlets are also referred to as the Microsoft Online Services cmdlets - hence the MSOL prefix they use).

This post assumes that you have user accounts synchronized from your local AD instance, because that’s the scenario I’m familiar with. It also assumes your other domains have been set up properly in Office 365 and local AD, and that you have purchased the appropriate number of licenses.

The User Principal Name

This isn’t mandatory, but does make it easier for users. The company I work for has several trading names, and an internal domain that does not match any of those trading names. Let’s call the internal domain internal.net, and the domains we actually trade on as companyA.com, companyB.com.

Side note: please don’t visit any of these websites; they are being used as examples in place of real names and I cannot speak to the contents of them.

When users give out their email address, it ends in CompanyA.com or CompanyB.com. We set this up using an SMTP alias, tied to the Company attribute in AD. So using that same attribute, we can rewrite the user’s UPN to match the domain they’d expect to see.

Fixing this only requires the regular Active Directory cmdlets.

    $users = Get-ADUser -SearchBase "OU=Users,OU=Testing,DC=internal,DC=net" -Filter * -Properties Company
    foreach ($user in $users) {
        Write-Verbose "Looking at $($user.UserPrincipalName)"
        $upnsplit = $user.UserPrincipalName.Split("@")
        switch ($user.Company) {
            "Company A" {$upnsuffix = "companya.com"}
            "Company B" {$upnsuffix = "companyb.com"}
            default {
                Write-Warning "$($user.UserPrincipalName) potentially has incorrect Company information."
                $upnsuffix = $upnsplit[1]
            }
        } #the switch must be closed here, before the UPN is written back
        Write-Verbose "Setting $($user.UserPrincipalName) to $($upnsplit[0])@$upnsuffix"
        $user | Set-ADUser -UserPrincipalName "$($upnsplit[0])@$upnsuffix"
    }

Wait for a dirsync, and users will now have a login name that matches their expected domain. If the Company information does not match Company A or Company B (as was the case for a lot of service accounts we used, where they would only ever mail internally), it will set the UserPrincipalName to what it originally was.
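The Company-to-suffix mapping is easy to check on its own before letting it loose on real accounts. Here is a minimal sketch, assuming a hypothetical helper called `Get-RewrittenUpn` and using a hashtable in place of the `switch`; the company names and domains are the same placeholders as above.

```powershell
# Hypothetical helper: maps a Company value to a UPN suffix and rebuilds the UPN.
function Get-RewrittenUpn {
    param (
        [Parameter(Mandatory = $true)][string]$UserPrincipalName,
        [string]$Company
    )
    # Placeholder trading names and domains, as used in this post.
    $SuffixMap = @{
        'Company A' = 'companya.com'
        'Company B' = 'companyb.com'
    }
    $upnsplit = $UserPrincipalName.Split('@')
    if ($Company -and $SuffixMap.ContainsKey($Company)) {
        "$($upnsplit[0])@$($SuffixMap[$Company])"
    }
    else {
        # Unknown or empty Company: leave the UPN as it was.
        $UserPrincipalName
    }
}

# Usage with real accounts (assumes the ActiveDirectory module and the -Properties Company query above):
# $users | ForEach-Object { Get-RewrittenUpn -UserPrincipalName $_.UserPrincipalName -Company $_.Company }
```

With the mapping in a hashtable, adding another trading name is a one-line change, and you can review the list of proposed UPNs before piping anything to `Set-ADUser`.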

The Usage Location

The UsageLocation is how MS determines if a license can be applied to the account - as they cannot legally operate some Office 365 services in some territories.

If all of your users are in the same country, this is incredibly easy. If not already done, run `Connect-MSOLService` to connect PowerShell to your Office 365 instance. Then run:

    Get-MSOLUser -All | Set-MSOLUser -UsageLocation GB

You’re done. (See ISO 3166-1 alpha-2 for the full list of country codes and why you should use GB rather than UK).

You can pass normal AD users to the Set-MSOLUser command, which can be useful if most of your users are located in one country, but a group in their own OU is located in a different one.

The License

After setting the usage location, you can now assign a license. Run `Get-MSOLAccountSKU` to get the list of available licenses in your Office 365 tenant. Copy the relevant AccountSkuID and use it in the following commands. We’ll use `contoso:EXCHANGESTANDARD` as an example.

If you only have one type of license to assign, and you want to assign it to all accounts that do not currently have a license, the easiest way is with:

    Get-MsolUser -LicenseReconciliationNeededOnly | Set-MsolUserLicense -AddLicenses 'contoso:EXCHANGESTANDARD'

or

    Get-MsolUser -UnlicensedUsersOnly | Set-MsolUserLicense -AddLicenses 'contoso:EXCHANGESTANDARD'

Use `-LicenseReconciliationNeededOnly` if you’re migrating users - it’ll show those that have been migrated without a license assigned. Use `-UnlicensedUsersOnly` otherwise - but be careful, especially in a hybrid environment, as this may show a lot of users that don’t need licenses (users who have long since left the business; shared mailboxes that were set up as “User” mailboxes on-prem rather than “Shared” - if converted to a shared mailbox, they won’t need a license if they use under 50GB of space).

To be more specific about license assignment, you can leverage your existing AD groups. For example:

    Get-ADGroupMember "Branch Users" | Get-MSOLUser | Set-MsolUserLicense -AddLicenses 'contoso:EXCHANGESTANDARD'

You can also write a script to make sure they don’t already have a license before assigning one; I might cover the one I wrote later.
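For the "don’t already have a license" check, one option is a small pipeline filter. This is not the script I mentioned, just a sketch: `Select-UserWithoutLicense` is a hypothetical name, and it assumes the input objects expose `Licenses` entries with an `AccountSkuId` property, as the objects returned by `Get-MsolUser` do.

```powershell
# Hypothetical filter: passes through only users that do not already hold the given SKU.
function Select-UserWithoutLicense {
    param (
        [Parameter(Mandatory = $true, ValueFromPipeline = $true)]
        [object]$User,
        [Parameter(Mandatory = $true)]
        [string]$AccountSkuId
    )
    process {
        # Assumes each user has a Licenses collection whose items carry AccountSkuId.
        if (@($User.Licenses.AccountSkuId) -notcontains $AccountSkuId) {
            $User
        }
    }
}

# Usage (assumes Connect-MsolService has already been run):
# Get-ADGroupMember "Branch Users" | Get-MSOLUser |
#     Select-UserWithoutLicense -AccountSkuId 'contoso:EXCHANGESTANDARD' |
#     Set-MsolUserLicense -AddLicenses 'contoso:EXCHANGESTANDARD'
```

Filtering on the specific SKU rather than on whether the user has any license at all means someone holding a different license still gets the Exchange one.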

The Regional Configuration

If all of your users access their email via Outlook on their desktop, then you’re pretty much done on the administration side after they’ve been migrated. However, if you have a large quantity of users reliant on Outlook Web Access, as we do, then not doing this can result in some unnecessary support calls.

When a user logs in for the first time to OWA for Office 365, they are prompted to set up their location and time zone settings. The location should be pre-filled in based on the UsageLocation above, but the time zone can be more confusing to users - we had a quantity that selected the first option on the list (Dateline Standard Time) rather than the GMT option, and then called to ask why the time was wrong on their emails.

To prevent this, we can use the `Set-MailboxRegionalConfiguration` command. This is part of the Exchange Online cmdlets rather than the MSOL ones, so we’ll need to import the Exchange Online cmdlets first:

    try {Get-Command Get-Mailbox -ErrorAction Stop | Out-Null}
    catch {
        $Credential = Get-Credential -Message "Please enter your credentials for Exchange Online."
        $splat = @{
            ConfigurationName = "Microsoft.Exchange"
            ConnectionURI = "https://outlook.office365.com/powershell-liveid/"
            Credential = $Credential
            Authentication = "Basic"
            AllowRedirection = $true
        }
        $Session = New-PSSession @splat
        Import-PSSession $Session -DisableNameChecking
    }

(if you’re not familiar with the @splat syntax used, see here)

Now, we’ll get the details for someone who did it properly:

    Get-Mailbox GoodUser | Get-MailboxRegionalConfiguration

    Identity   Language   DateFormat   TimeFormat   TimeZone
    --------   --------   ----------   ----------   --------
    GoodUser   en-GB      dd/MM/yyyy   HH:mm        GMT Standard Time

And use that to fix everyone who didn’t - or hasn’t logged in yet.

    $timezone = "GMT Standard Time"
    Get-Mailbox | Get-MailboxRegionalConfiguration | where TimeZone -ne $timezone | Set-MailboxRegionalConfiguration -TimeZone $timezone

(It goes without saying, but be careful about international users; filter them out as close to the left side of the pipeline as is possible)

15 Feb 2017 >> On Crowdfunding

So, as you know if you follow me on Twitter, I back a lot of Kickstarters (so much so that Kickstarter has branded me a ~superbacker~). I believe it’s really important to support independent artists, and that’s the biggest way I do so - along with monthly Patreon payments, and commissions from my furry accounts.

So here’s a post I’ve been meaning to write about what will get me to back a project or not.

Small Teams

I prefer to back games made by smaller teams. This means I’m less likely to back if there’s a heavy external developer/publisher presence - not just as help for creating physical boxes, but throughout. I did not back Shenmue 3 because I felt that Sony could have easily funded that themselves. I did not back Mighty No. 9 for various reasons, but the lack of transparency over Deep Silver’s involvement was one of them.

Amplitude was one exception, as I felt that Sony would never back that; it was Harmonix fighting for the right to use the name.

I don’t have a big problem if a partner gets picked up after the crowdfunding campaign finishes - for a recent example, Frog Fractions 2 getting some additional assistance from Adult Swim Games.

UK Shipping

If UK shipping on your product is half the price of the thing, chances are I’m not backing it - or at least, not at a tier without digital rewards. I would have loved Fidget Cube, but IIRC, UK shipping was very expensive compared to the price of the project. When I first saw the Voyager Golden Record replica, it did not have the digital download tiers - and again, UK shipping was really expensive.

Hierarchy of Sites

I trust a crowdfunding attempt on Kickstarter more than I trust one on Indiegogo - due to no flexible funding, and hardware projects requiring a working prototype.

Fig is an odd one. My stance on Fig is to look at the ratio of backers to investors. For example, looking at the current project at time of writing, “Little Bug”:

What this tells me is that the majority of people interested in this game have dollar signs in their eyes rather than actually wanting the game. I can’t see this providing a good return on investment, because if no one wants to pay for the game itself while crowdfunding…

Electronics

I don’t back robots, security products, wireless earbuds with voice commands/translation features, or any electronics on Indiegogo (see above). At best, these products are snake oil. At worst, they are actively transmitting any data you give them to a country where you don’t particularly want your data going.

With robot crowdfunding pitches, most of them promise the world with things they can’t possibly deliver, and are also no match for stairs, and would probably be savagely attacked by my two dogs.

As for wireless earbuds, for starters I’m going to lose those - but also, for the ones offering translation features, they’re just connecting to an app on your phone, and the videos usually edit out the few seconds while the app processes. Since it’s not seamless, you may as well be using the app on your phone anyway to communicate.

Text and Images

I am very unlikely to watch your video. I will only watch your video if your campaign text is interesting (either in a good sense or a bad one), but there’s something I’m missing. In most cases, I’m scrolling through your page, and I’ve decided if I’m backing or not from that alone.

This doesn’t mean the video isn’t important. I choose my way, others choose the opposite.

Direct Marketing

If you @-me on Twitter with a link to your campaign because you were randomly searching for the word “Kickstarter”, well… I’m less harsh than some people, I actually usually will check out your campaign, but you’re starting a few points in the negative towards me actually backing, thanks to the minor irritation. If your project is good, you shouldn’t need that; I should hear about it through friends or the other people I follow.

So yeah, those are my basic thoughts on backing. One more: for games, I expect the project to get delayed - but I’ve never backed one that got cancelled on me yet.

07 Jan 2017 >> Don't Fear the Registry

I’m not a fan of some of the advice about the registry you see online. You know the type.

⚠ You should only view the registry exactly as described in this guide! Your computer may explode if you even as much as think about opening REGEDIT without clear direction!

I mean, you should not go in and modify things willy-nilly, that much is common sense. But this level of warning discourages even the simplest exploration into it - to stop people even looking for solutions to problems in there without being told.

I follow the “look, don’t touch (usually)” principle - after all, no registry entry should change simply because you observed it in REGEDIT or PowerShell (and any that do change on observation have a decent chance of being malware).

I use OneDrive for sharing files between my desktop and my Surface 3, and editing things like this from anywhere. My OneDrive folder on my desktop is on my large media drive (M:\OneDrive), while on my Surface, it’s in the default location of ~\OneDrive - as OneDrive can only be placed on an NTFS drive, and the micro SD card in the Surface 3 is FAT.

If I need to use a file path in a script that is intended for personal use only, I don’t know which device I’m on. I don’t particularly like relative paths in scripts if the relative path isn’t in the same folder as the script (or a subdirectory). I don’t want to create a fake M:\ drive on my Surface, or create a symlink from ~\Desktop on my desktop.

OneDrive folders are user specific, so I know that I’m looking in HKEY_CURRENT_USER. PowerShell makes it pretty easy to locate the registry entry I’m looking for:

    Set-Location HKCU:\
    Get-ChildItem *OneDrive* -Recurse

        Hive: HKEY_CURRENT_USER\SOFTWARE\Microsoft

    Name       Property
    ----       --------
    OneDrive   EnableDownlevelInstallOnBluePlus : 0
               # output snipped
               UserFolder                       : C:\Users\Charles\OneDrive

So now I know I have a consistent place to look for where the OneDrive folder actually is. I can wrap a helper function around that, put that in a module, and boom, all I need to do is call Get-OneDrivePath when I want to know where to put something in PowerShell - no matter which machine I’m using.

07 Dec 2016 >> Putting the Powers in PowerShell

So, up until this week, one of my deficiencies in PowerShell was using powers of numbers. Finding that the ^ operator did not work, when I needed to square numbers, I would do things like the below:

    $variable * $variable

For anything more complex, it’d inevitably be back to my old friend Excel.

To give the answer away early, the answer is in PowerShell’s [math] type. We can get a list of methods available in this type by using [math] | Get-Member -Static

    PS C:\> [math] | Get-Member -Static

       TypeName: System.Math

    Name MemberType Definition
    ---- ---------- ----------
    Abs  Method     static sbyte Abs(sbyte value), static int16 Abs(int16 value), static int Abs(int value), ...
    Acos Method     static double Acos(double d)
    Asin Method     static double Asin(double d)
    Atan Method     static double Atan(double d)
    ...

Specifically, the answer lies in the [math]::Pow(x,y) method - translating to the standard xʸ notation.

    PS C:\> [math]::pow(5,3)
    125

Simple. That’s all you need to do. If you want to see what got me to learn this, read on.
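A couple of quick checks, as a rough sketch rather than a full reference: the old squaring trick becomes a single call, and since [math]::Pow hands back a [double] even for whole-number inputs, a cast is needed if an integer is wanted. The variable name and values here are only for illustration.

```powershell
# Squaring without writing $variable * $variable
$variable = 12
[math]::Pow($variable, 2)          # 144

# Pow returns a [double], even when both inputs are whole numbers
[math]::Pow(5, 3).GetType().Name   # Double

# Cast the result when an integer is needed
[int][math]::Pow(2, 10)            # 1024
```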

Like a lot of PowerShell’s more under-the-hood features, I finally found the answer for this when trying to do something silly with a video game.

The Scenario

The latest Pokémon games - Pokémon Sun & Moon - contain a feature known as “Poké Pelago”, which allows you to train your Pokémon when not playing the game - at a rate of approximately 300 experience points per 30 minutes (which can be sped up to 15 minutes with in-game items).

I have a Pokémon in the “Slow” experience group. They are currently at level 40 ($CurrentLevel). At level 80 ($TargetLevel), they learn a useful move. There are ways to make them learn this move earlier, but how long would it take to use Poké Pelago to go from $CurrentLevel to $TargetLevel?

Tasks

1. Find the amount of experience points that correspond with $CurrentLevel ($CurrentExp).
2. Find the amount of experience points that correspond with $TargetLevel ($TargetExp).
3. Subtract $CurrentExp from $TargetExp, divide the result by 300 to get the number of Poké Pelago sessions ($Sessions).
4. Use New-TimeSpan to translate $Sessions into the amount of time it will take.

1 and 2 on this list are repeated work, and so should be made into a function.

The formula for calculating “Slow” experience points is given in the Bulbapedia article above - being 5n³, where n is the Pokémon’s level.

The Script

    function Get-PokémonSlowExperiencePoints {
        param(
            [int]$Level
        )
        5 * [math]::pow($level,3)
    }

(side note: I’m cutting some corners here, but if you’re writing functions for a (primarily) English language audience, you should always include an alias if you want to use a character like é in your function name. Otherwise, someone is going to type Get-Poke and wonder why tab completion doesn’t work).

With the function above defined, the rest of the script becomes very easy to write:

    $CurrentLevel = 40
    $TargetLevel = 80

    $CurrentExp = Get-PokémonSlowExperiencePoints -Level $CurrentLevel
    $TargetExp = Get-PokémonSlowExperiencePoints -Level $TargetLevel

    $Sessions = ($TargetExp - $CurrentExp) / 300

    Write-Warning "Without Poké Beans (1 session = 30 minutes)"
    New-TimeSpan -Minutes (30 * $Sessions)

    Write-Warning "With Poké Beans (1 session = 15 minutes)"
    New-TimeSpan -Minutes (15 * $Sessions)

Which gives the output below:

    WARNING: Without Poké Beans (1 session = 30 minutes)

    Days              : 155
    Hours             : 13
    Minutes           : 20
    Seconds           : 0
    Milliseconds      : 0
    Ticks             : 134400000000000
    TotalDays         : 155.555555555556
    TotalHours        : 3733.33333333333
    TotalMinutes      : 224000
    TotalSeconds      : 13440000
    TotalMilliseconds : 13440000000

    WARNING: With Poké Beans (1 session = 15 minutes)

    Days              : 77
    Hours             : 18
    Minutes           : 40
    Seconds           : 0
    Milliseconds      : 0
    Ticks             : 67200000000000
    TotalDays         : 77.7777777777778
    TotalHours        : 1866.66666666667
    TotalMinutes      : 112000
    TotalSeconds      : 6720000
    TotalMilliseconds : 6720000000

So yes, it is not worth training my Pokémon in this way - particularly as the game only allows you to queue up 99 Poké Pelago sessions at once, and it would take 7466.66667.

If I wanted to, I could turn this script into a function, and/or write a more advanced helper function that accounts for the other kind of experience groups and perhaps takes pipeline input. Doing these is left as an exercise to the reader - this was just a quick script to demonstrate to myself that it would not be worth trying to use Poké Pelago in this way.
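For anyone who wants a head start on that exercise, here is one possible sketch of the time calculation wrapped in a function that takes pipeline input. The function name, its ASCII alias, and the parameter names are all made up for this example. It also sidesteps the experience group formulas entirely by taking the experience gap as its input, and it uses [timespan]::FromMinutes instead of New-TimeSpan so that fractional minutes are not rounded away.

```powershell
function Get-PokéPelagoTime {
    # Hypothetical ASCII alias, so typing Get-Poke and pressing Tab still finds the function
    [Alias('Get-PokePelagoTime')]
    [CmdletBinding()]
    param(
        # Experience still needed, e.g. $TargetExp - $CurrentExp
        [Parameter(Mandatory, ValueFromPipeline)]
        [double]$Experience,

        # Approximate experience gained per session (300 in this post)
        [double]$ExperiencePerSession = 300,

        # 30 without Poké Beans, 15 with them
        [double]$MinutesPerSession = 30
    )
    process {
        # Multiply before dividing so whole-number inputs stay exact
        $minutes = $Experience * $MinutesPerSession / $ExperiencePerSession
        [timespan]::FromMinutes($minutes)
    }
}

# Usage: the 2,240,000 experience gap between level 40 and level 80 from the script above
2240000 | Get-PokePelagoTime
2240000 | Get-PokePelagoTime -MinutesPerSession 15
```

Because the work happens in the process block, several experience gaps can be piped in at once and each one comes back as its own TimeSpan.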

Since initially writing this article, an exploit has been discovered in the game that causes Poké Pelago sessions to complete instantly, thus making the venture more worthwhile.

05 Oct 2016 >> My Philosophy

I’ve been reading Derren Brown’s Happy recently, an exploration of philosophy and how it can help us. At the time of writing, I am only part way through, but I still wish to share mine.

My philosophy in life is fairly simple, accrued from a mishmash of Western philosophers, television shows, and The Ethical Slut, which is genuinely one of the most transformative books I’ve read when it comes to my personal philosophy, and something I would recommend in a heartbeat to anyone who doesn’t mind descriptions of non-traditional forms of love:

The world is a harsh, unforgiving place. Do what you can to make things a little brighter for people. Love others cleanly, openly, and honestly, and try to act in a safe, sane and consensual manner.

Whether you wield the influence to change many lives, or if you just have the means to help friends in need, the scale doesn’t matter, as long as you help someone.

I hung around a community of people that bullied people like me for years, trying to hide, feigning that I was one of Us instead of one of Them. Because I was one of Them, I assumed that no one would ever love me if they found out what I was really like - possibly the most incorrect assumption I will ever make. I swore that I would never lead others to make the same mistake.

This also ties into my views on the afterlife. I’m agnostic, and while I do want to believe that my friends and family who have passed are in a better place, my honest opinion is that no one can know the truth until the point at which they die.

One episode of the British sci-fi series Red Dwarf has always stuck with me.

In it, the crew face The Inquisitor, an entity who goes through time, and judges if each person has lived the best life they can - and if not, erases them from history and replaces them with a person that could have lived in their place. However, he does not judge everyone objectively - with the heart weighed against a feather, or St. Peter’s checklist of good and bad deeds. Everyone is judged subjectively, by their own self.

(These two pass the test, while Kryten, the android, fails because he does not acknowledge that he has grown beyond his programming, and Dave Lister, the drifter, feels that the Inquisitor does not have the right to judge)

That is the test which I think about passing - particularly because it’s similar to the questions I would ask myself when the anxiety got particularly bad. But it is a test I’m happy to say that I would now pass by my own standards. There might be a version of me that is far more accomplished in their career, but is also a complete arsehole - and I would say that I’ve made a far better use of my life than that.

Next time, something technical for once.

26 Sep 2016 >> An Intro

So, this is something I’ve wanted to do for a while.

This is vei.space, a place to share my thoughts about personal issues and help with technical things. Because while I use my Tumblr a lot, it’s mainly for reblogging content other people have made, rather than sharing my own. My objective here is to write at least one blog post a month. Not a huge one, just… something.

My first planned post is some general notes on my philosophy in life, before I move onto the more technical challenges I’ve been facing recently.